🌐 Technologies Likely to Shape a Future World War — and the Geopolitical Lessons We Ignore at Our Peril

If a future global conflict were to erupt, it would not resemble the industrial wars of the 20th century. There would be no single “front line,” no clear declaration, and no neat separation between civilian and military domains. The battlefield would be everywhere: networks, space, supply chains, information ecosystems, and economies.

Modern great-power competition suggests that any large-scale conflict would be defined less by massed armies and more by technology-enabled disruption. Understanding these technologies is not about glorifying war—it is about recognizing how power, deterrence, and instability now operate.

1) Artificial Intelligence as the Nervous System of War

AI will not “decide” wars on its own, but it will compress decision time to a level humans struggle to match.

Likely roles

Intelligence analysis at machine speed

Pattern recognition across vast sensor networks

Decision-support for commanders under time pressure

Strategic reality

The side that integrates AI without surrendering human judgment gains an advantage. The side that over-automates risks catastrophic escalation through misinterpretation.

Lesson

Speed without restraint increases the risk of accidental war.

2) Cyber Warfare as a Primary Opening Move

Cyber operations have already become a normalized instrument of state power. In a future world war, cyber actions would likely precede any kinetic violence.

Targets

Power grids and energy distribution

Financial systems and payment rails

Communications and logistics platforms

Strategic reality

Cyber conflict blurs the line between war and peace. Attribution is slow, retaliation is ambiguous, and escalation ladders are unclear.

Lesson

In cyberspace, ambiguity is both a weapon and a liability.

3) Autonomous and Semi-Autonomous Weapons Systems

Uncrewed systems in the air, at sea, and on land are likely to dominate tactical environments. Their importance lies not only in lethality but also in scalability and deniability.

Characteristics

Low cost compared to traditional platforms

High saturation potential

Reduced political cost of deployment

Strategic concern

When machines can select and engage targets with minimal human input, accountability dissolves.

Lesson

When responsibility is diluted, restraint erodes.

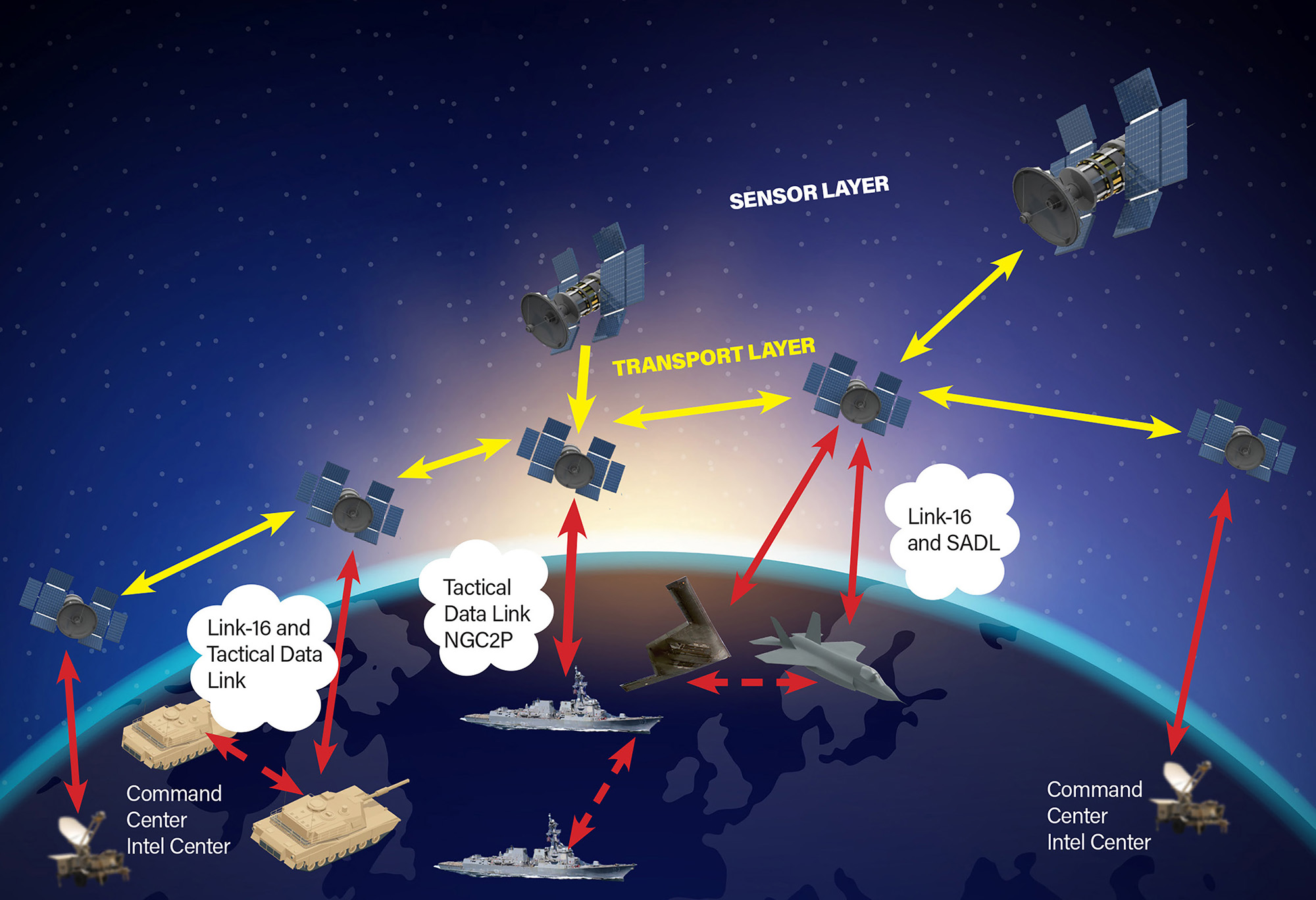

4) Space as a Contested Warfighting Domain

Modern societies depend on satellites for:

Navigation

Communications

Weather forecasting

Financial timing

Disrupting space infrastructure would paralyze civilian life without firing a shot on land.

Geopolitical risk

Kinetic anti-satellite strikes create debris that endangers all actors, including neutral states.

Lesson

Space warfare punishes the attacker almost as much as the target.

5) Information Warfare and Cognitive Attacks

Future wars will be fought inside human perception.

Techniques

AI-generated misinformation

Deepfakes undermining trust

Algorithmic amplification of social fractures

Strategic objective

Not to convince everyone—but to ensure no one trusts anything.

Lesson

A society that cannot agree on reality cannot coordinate defense.

6) Economic and Supply-Chain Warfare

Economic coercion is already a preferred tool of statecraft.

Weapons

Sanctions

Export controls (especially on semiconductors)

Energy leverage

Maritime chokepoints

Reality

Wars can now be lost without battlefield defeat—through industrial exhaustion.

Lesson

Industrial resilience is national security.

7) Biotechnology and Dual-Use Research Risks

Advances in biotechnology bring enormous medical promise—but also strategic risk.

Concern

Dual-use research lowers the barriers to harm, whether through deliberate misuse or accidental release.

Lesson

The most dangerous technologies are those that do not look like weapons.

The Core Geopolitical Lessons

1️⃣ Deterrence Is Now Multidomain

Military strength alone is insufficient. States must deter across cyber, economic, informational, and space domains simultaneously.

2️⃣ Escalation Will Be Fast—and Hard to Control

AI-accelerated decision cycles reduce the window for diplomacy.

3️⃣ Civilian Infrastructure Is the New Center of Gravity

Power grids, data centers, and trust in institutions matter more than tank counts.

4️⃣ Alliances Matter More Than Ever

No state can secure all domains alone. Fragmented alliances invite coercion.

A Final, Uncomfortable Truth

A future world war would not announce itself. It would emerge gradually, normalized step by step, disguised as “competition,” “retaliation,” or “defensive measures.”

Technology does not make war inevitable—but it lowers the threshold for catastrophic mistakes.

The greatest risk of advanced warfare is not malicious intent but miscalculation at machine speed.
